Networks Are Graphs, Not Language Problems: A Look at NetAI’s GNN Approach

A few weeks ago at the 40th edition of Networking Field Day, one presentation stood out not because it promised “AI for networking,” but because it challenged some of the assumptions the industry has recently made about how AI should actually be applied to network operations.

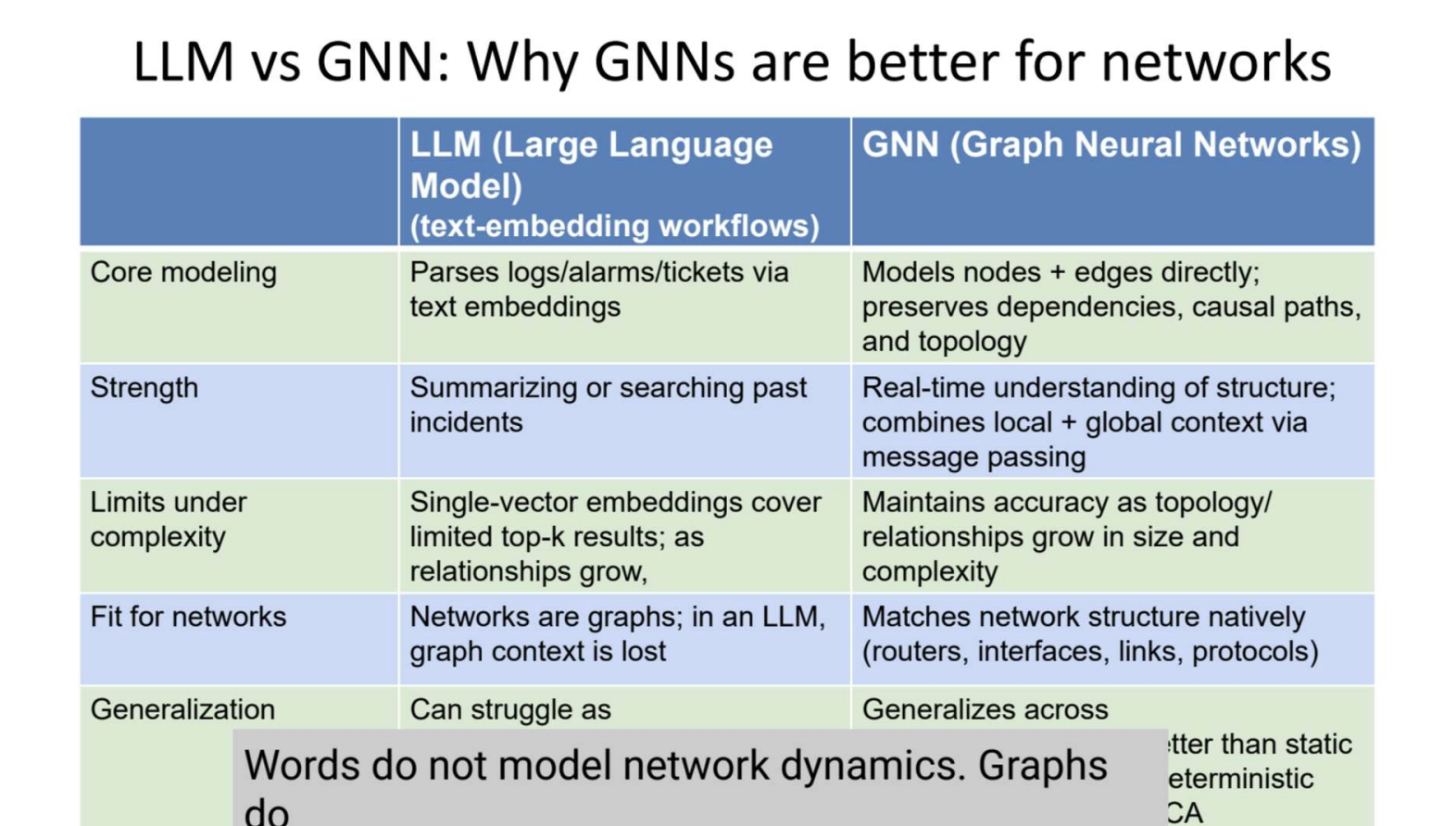

At a high level, NetAI is focused on deterministic root cause analysis for network operations using Graph Neural Networks (GNNs), not Large Language Models (LLMs). Their core argument is straightforward: networks are graphs, not language problems. Therefore, a system designed specifically to model relationships between nodes, links, protocols, and dependencies may be fundamentally better suited for troubleshooting and operational reasoning than systems optimized for predicting words.

I think that distinction matters more than many people realize.

The current AI wave in networking has largely revolved around bolting LLMs onto observability stacks. In fairness, I believe there’s real value there. LLMs are excellent at summarization, documentation lookup, conversational interfaces, and accelerating operational workflows. But NetAI’s presentation highlighted an uncomfortable truth that many experienced network operators already know: most current “AIOps” systems still leave the hardest part to humans, namely determining whether the suggested root cause is actually correct.

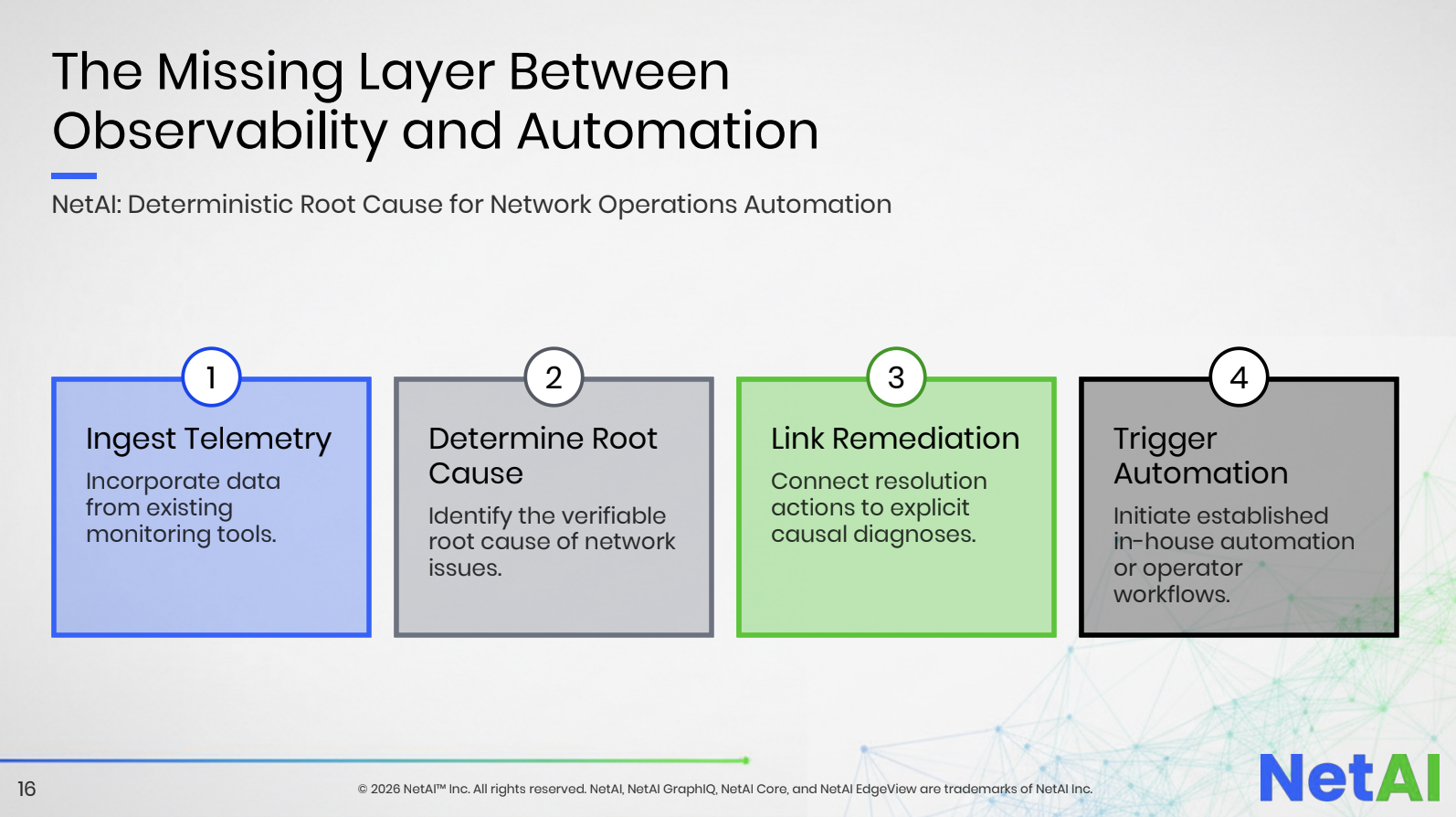

NetAI’s thesis is that autonomous operations can’t truly exist without deterministic and operator-verifiable root cause analysis (image left). Their platform positions itself as the missing layer between observability and automation. Existing telemetry, logs, topology data, alarms, and configurations are ingested from the tools organizations already own. From there, NetAI attempts to identify causal relationships, determine root cause, correlate downstream alarms, and even trigger remediation workflows or automation systems.

They aren’t positioning themselves as another observability vendor demanding a rip-and-replace migration, which I think is very important to note. Their architecture is intentionally designed as an overlay on top of existing operational tooling. And frankly, that operational positioning is strategically smart.

Network operations teams today are drowning in telemetry but starving for causality. Modern infrastructures generate enormous volumes of metrics, logs, events, flow records, synthetic test results, and topology data. The problem is no longer data collection. Instead, the problem is determining which event actually matters.

Anyone who has worked in a large enterprise or service provider environment understands the reality NetAI described during their presentation (which you can watch here). A failure occurs, alarms explode across multiple systems, tickets flood the NOC, escalation chains kick off, and engineers spend hours manually validating whether the apparent symptoms are actually the root cause. Clue chaining at its finest!

This becomes even more problematic in AI-era infrastructure.

Modern distributed systems are increasingly dynamic, multi-vendor, software-driven, and highly interconnected. East-west traffic patterns, overlay abstractions, rapidly changing application dependencies, and increasingly autonomous operational systems create environments where causal reasoning becomes dramatically more difficult for humans to do alone. Traditional event correlation engines often struggle because correlation is not causation.
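To make the "correlation is not causation" point concrete, here is a minimal sketch in plain Python. It is not NetAI's method, and it is a simple graph traversal rather than a GNN. The device names, the `DEPENDS_ON` map, and both functions are hypothetical, and the sketch assumes every failed upstream device actually raises an alarm. Given alarms that fire at the same moment, it separates alarmed devices that have an alarmed dependency upstream (likely symptoms) from those that do not (root cause candidates).

```python
# Hypothetical topology: each device maps to the upstream devices it depends on.
DEPENDS_ON = {
    "core-1": [],
    "agg-1": ["core-1"],
    "agg-2": ["core-1"],
    "leaf-1": ["agg-1"],
    "leaf-2": ["agg-1"],
    "leaf-3": ["agg-2"],
}


def upstream_closure(node, depends_on):
    """Return every device that `node` depends on, directly or transitively."""
    seen = set()
    stack = list(depends_on.get(node, []))
    while stack:
        current = stack.pop()
        if current not in seen:
            seen.add(current)
            stack.extend(depends_on.get(current, []))
    return seen


def root_cause_candidates(alarmed, depends_on):
    """Alarmed devices with no alarmed device anywhere upstream of them."""
    alarmed = set(alarmed)
    return sorted(
        node
        for node in alarmed
        if not (upstream_closure(node, depends_on) & alarmed)
    )


def downstream_symptoms(alarmed, depends_on):
    """Alarmed devices that sit below at least one other alarmed device."""
    candidates = set(root_cause_candidates(alarmed, depends_on))
    return sorted(set(alarmed) - candidates)


# Four alarms arrive in the same time window.
alarms = ["agg-1", "leaf-1", "leaf-2", "leaf-3"]
print(root_cause_candidates(alarms, DEPENDS_ON))  # ['agg-1', 'leaf-3']
print(downstream_symptoms(alarms, DEPENDS_ON))    # ['leaf-1', 'leaf-2']
```

A purely time-based correlator would likely lump all four alarms into one incident. The topology says otherwise: `leaf-3` hangs off `agg-2`, so its alarm is coincident with the `agg-1` failure but not explained by it.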

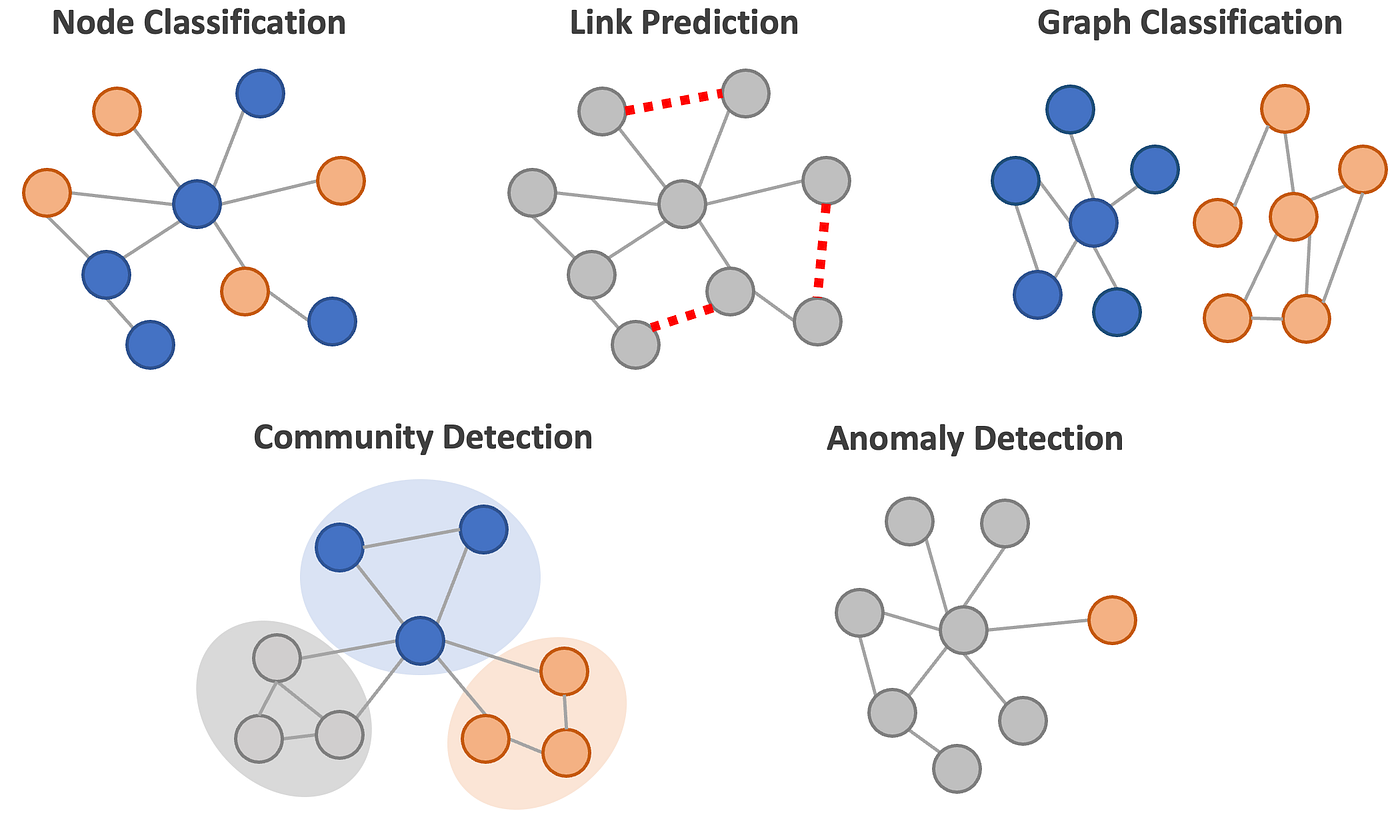

This is where Graph Neural Networks become interesting.

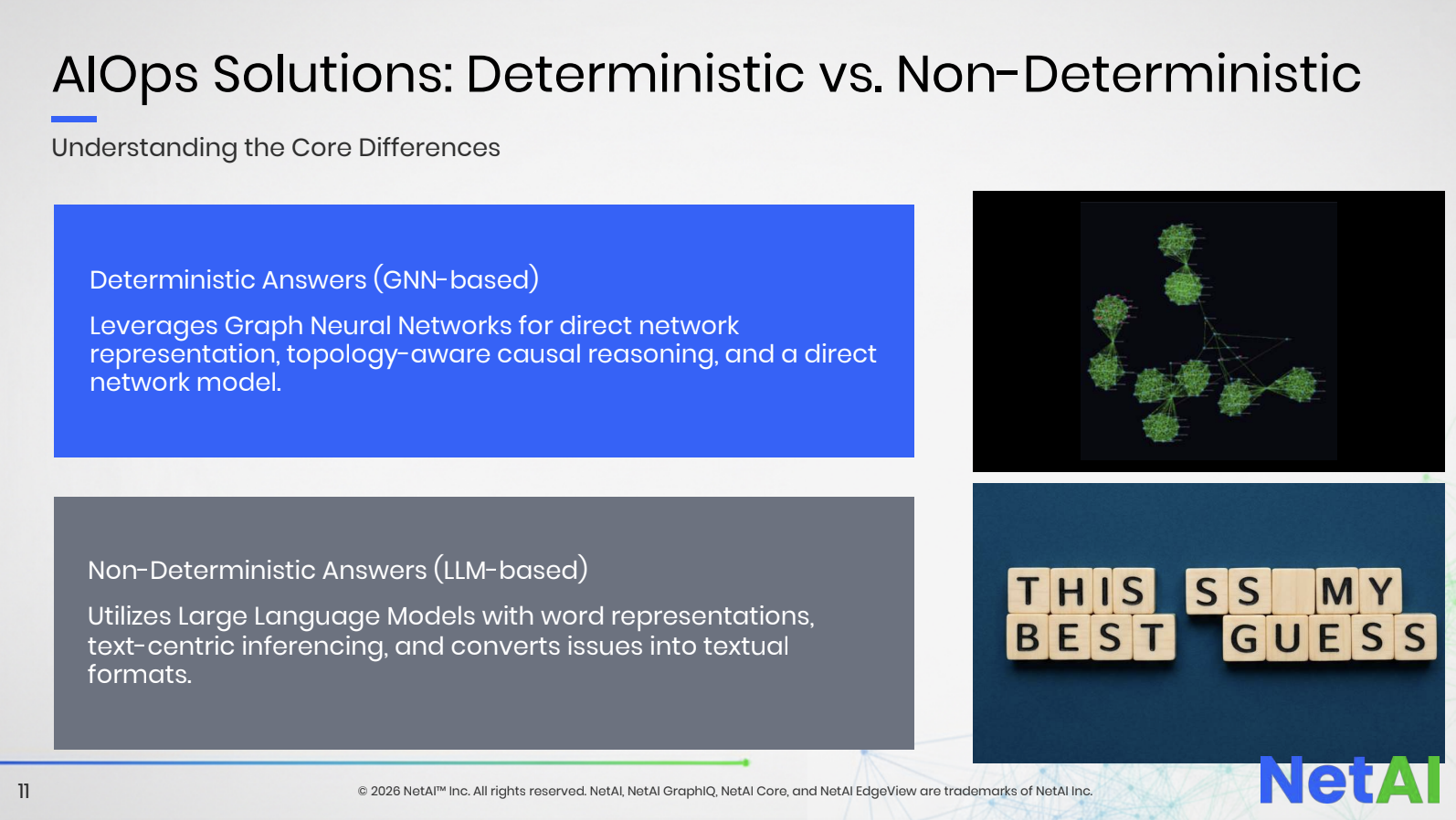

If you’re unfamiliar with GNNs, they’re basically a type of machine learning model specifically designed to operate on graph structures. In the image on the right, you can see how NetAI differentiates between deterministic and non-deterministic AI models.

Specifically, NetAI characterizes LLM-based models as non-deterministic, which leads to less predictable outcomes. A non-deterministic system can produce different results from the same input, even under the same starting conditions. For example, enter the same prompt into ChatGPT, Claude, etc., over and over, and the result will typically be slightly different each time.

This is unlike deterministic systems, which behave consistently. GNN-based models, by virtue of mathematically identifying relationships among nodes, provide direct network representation and causal reasoning.
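The difference between the two behaviors can be shown with a toy example. This is only a sketch under stated assumptions: the `SCORES` table and both functions are made up for illustration, and the sampled picker stands in for the weighted random decoding that chat products commonly use, not for any specific model's internals.

```python
import random

# Hypothetical scores a model might assign to candidate diagnoses.
SCORES = {"link-down": 0.55, "bgp-flap": 0.30, "config-drift": 0.15}


def pick_deterministic(scores):
    """Always return the highest-scoring label (ties broken alphabetically)."""
    return max(sorted(scores), key=lambda label: scores[label])


def pick_sampled(scores, rng):
    """Draw one label at random, weighted by its score."""
    labels = list(scores)
    weights = [scores[label] for label in labels]
    return rng.choices(labels, weights=weights, k=1)[0]


rng = random.Random()  # unseeded, so every run can differ

# Same input, asked 100 times: the deterministic picker yields one answer.
print({pick_deterministic(SCORES) for _ in range(100)})

# Same input, asked 100 times: the sampled picker usually yields several.
print({pick_sampled(SCORES, rng) for _ in range(100)})
```

The first set always contains a single label. The second usually contains more than one, which is exactly the property an operator has to account for before wiring an answer into automation.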

Unlike traditional neural networks that work well with images or sequences, GNNs process relationships between connected entities. In networking terms, routers, switches, interfaces, protocols, paths, and dependencies naturally form graph structures. And that matters because a network is fundamentally defined by relationships, as the image on the left illustrates with various GNN relationships and uses.

A routing adjacency matters because of what it connects to. A link failure matters because of the topology around it. A congestion event matters because of its upstream and downstream dependencies. GNNs are designed to learn and reason across those relationships directly.
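For readers who want a feel for how a model can reason across those relationships, below is a minimal sketch of a single message-passing step, the basic operation that GNN layers repeat. It is heavily simplified and is not NetAI's implementation: the topology, the single "alarm" feature, and the fixed `self_weight` are all assumptions for illustration. A real GNN would learn its weights from data and typically apply a nonlinearity after each step.

```python
# Hypothetical four-device topology as an undirected adjacency list.
NEIGHBORS = {
    "r1": ["r2", "r3"],
    "r2": ["r1", "r4"],
    "r3": ["r1", "r4"],
    "r4": ["r2", "r3"],
}

# One illustrative feature per device: 1.0 if it is raising an alarm.
FEATURES = {"r1": 0.0, "r2": 0.0, "r3": 0.0, "r4": 1.0}


def message_passing_step(features, neighbors, self_weight=0.5):
    """Blend each node's feature with the mean of its neighbors' features."""
    updated = {}
    for node, value in features.items():
        adjacent = neighbors.get(node, [])
        if adjacent:
            neighbor_mean = sum(features[n] for n in adjacent) / len(adjacent)
        else:
            # An isolated node has nothing to aggregate, so it keeps its value.
            neighbor_mean = value
        updated[node] = self_weight * value + (1 - self_weight) * neighbor_mean
    return updated


after_one = message_passing_step(FEATURES, NEIGHBORS)
after_two = message_passing_step(after_one, NEIGHBORS)
print(after_one)  # {'r1': 0.0, 'r2': 0.25, 'r3': 0.25, 'r4': 0.5}
print(after_two)  # {'r1': 0.125, 'r2': 0.25, 'r3': 0.25, 'r4': 0.375}
```

After one step, only `r4`'s direct neighbors have picked up any signal from its alarm. After two steps, `r1`, which is two hops away, has as well. Each step lets information travel one hop further through the topology, which is how the structure of the network ends up shaping what the model sees at every node.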

(You can watch NetAI’s deep dive into GNNs for networking here.)

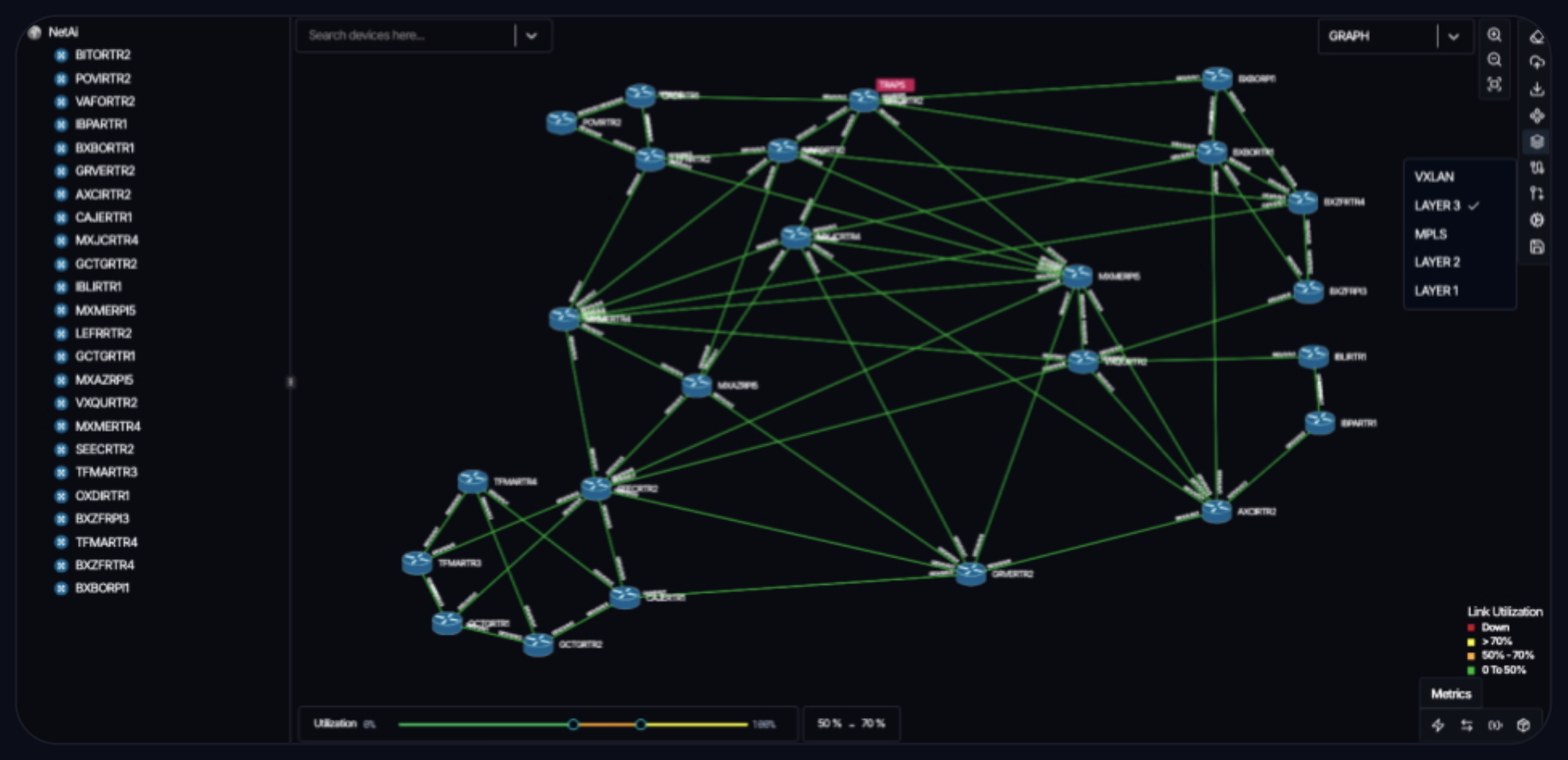

In the image on the right from the NetAI platform, notice how the generated topology looks very much like the example GNN images above.

NetAI repeatedly emphasized this distinction during their presentation. (By the way, it really was a pleasure to be back as a delegate for NFD40.) Their argument was not necessarily that LLMs are “bad,” but rather that LLMs were built to model language and probabilistic text relationships, whereas networks already have deterministic structural models.

That’s a subtle but very important point.

The networking industry has become understandably excited about generative AI, but many vendors are currently using LLMs as universal hammers for every operational nail. NetAI is taking a more domain-specific approach. Instead of asking an LLM to infer network state through textual descriptions and embeddings, they’re directly modeling topology and dependencies themselves.

Whether their approach ultimately proves superior at scale remains to be seen, but conceptually I can’t deny that it’s compelling.

What also stood out during the presentation was that NetAI was refreshingly opinionated. In an industry where nearly every AI product currently sounds interchangeable, they clearly articulated a differentiated architectural position based on their belief that GNNs solve the probabilistic problem of LLMs (screenshot below). They were willing to challenge prevailing assumptions instead of simply adding “copilot” branding to existing monitoring platforms. (Can you say “Clippy for your network”?)

That confidence appears at least partially validated by some early traction. NetAI referenced work involving Google Cloud and MasOrange, including a pilot demonstrating autonomous operational workflows on Google Cloud’s AI stack. They cited reductions in MTTR from hours to under 30 minutes, alert noise reductions, and measurable operational improvements in service provider environments.

Of course, every vendor presentation includes optimistic metrics, and healthy skepticism is always appropriate. But the broader idea they’re pursuing aligns with where the industry needs to go. I really believe that the networking industry doesn’t merely need “more AI.” It needs operational systems capable of reasoning about infrastructure with enough confidence that automation becomes trustworthy. And that trust layer is critical.

Many organizations already possess sophisticated automation frameworks, orchestration platforms, and remediation tooling. What they often lack is confidence in triggering those systems automatically during ambiguous operational events. NetAI’s entire value proposition revolves around increasing confidence in causal diagnosis so automation can safely act.

That’s a far more meaningful operational challenge than simply generating summaries from logs.

In many ways, NetAI represents a broader trend that I expect we’ll see more of over the next several years: specialized AI architectures designed for infrastructure-specific reasoning rather than generic conversational intelligence.

Networking may ultimately become one of the strongest real-world use cases for graph-based AI systems because networks themselves are inherently graph problems.

Networking Field Day 40 featured plenty of discussion around AI infrastructure, observability, automation, and operations, but NetAI brought something slightly different to the conversation. Instead of asking how to add AI to networking, they asked a more important question:

What kind of AI actually understands networks in the first place?